Setting ourselves up for exploitation: RL in the wild

How simulator exploitation in AI, a dual of over-optimization, is the canary in the coal mine for the negative implications that could come from weakly bounded, data-driven iterative systems (RL).

I had a viral thread on Twitter (paper post for On the Importance of Hyperparameter Optimization for Model-based Reinforcement Learning), which is now a proud member of the “Specification gaming examples in AI” Hall of Fame from DeepMind. This Hall of Fame tracks examples of artificial intelligences (AIs) that solve a narrowly specified task by behaving in ways their creators never imagined. This mismatch between what the designer specifies and what the AI, or agent, actually does is only going to grow in the coming years.

Exploitation is the idea that the solution for a task has unintended behavior. Unintended behavior involves different levels of risk depending on the environment the agent operates in.

Reinforcement learning (RL) is poised for such exploitation because of how it is set up: it is a framework for the open-ended optimization of scalar reward functions (following the famous but contentious reward hypothesis). For more on RL and why it is a difficult optimization to bound, you can see my past article. RL is a general, data-driven optimizer, and we are in a time when two conditions create a tenuous situation for exploitation:

RL algorithms, at a theoretical level, are improving every year and many machine learning breakthroughs have been accelerating (see Transformers, or RL & Transformers examples 1, 2, or 3),

RL is beginning to be adopted in the real world, especially in environments that are hard to model (YouTube uses a variant of REINFORCE, Facebook launched their RL platform Horizon, and more).

This sets the world up for exploitation whose scale of impact is difficult to predict. Such impact has an upside and a downside, but when it comes to technological deployment, any substantial downside (even if it is disproportionately small with respect to the upside) is perceived by the public as an undue punishment. People expect technology to improve their lives, and agreeing with this perspective is central to how I think engineers should design systems.

This post covers a brief history of AIs, games, and exploitation, along with a discussion of which properties of RL make it more prone to exploitation. Below is a famous example: the racing game CoastRunners also gives a high score for doing "tricks," so the agent learns to maximize the score without even participating in the intended event.
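A toy version of the same incentive problem, not the actual game, can be sketched in a few lines of Python. Everything here is a made-up assumption for illustration: the 1-D track, the respawning bonus target, the reward values, and the names `run_episode`, `race_policy`, and `loop_policy`.

```python
def run_episode(policy, track_length=10, bonus_pos=2, horizon=50):
    """Roll out one episode on a hypothetical 1-D track; return (score, finished)."""
    pos, score, finished = 0, 0.0, False
    for t in range(horizon):
        move = policy(pos, t)  # +1 moves forward, -1 moves backward
        pos = max(0, min(track_length, pos + move))
        if pos == bonus_pos:
            score += 1.0  # the bonus target respawns, so it pays on every visit
        if pos == track_length:
            score += 10.0  # one-time reward for actually finishing the race
            finished = True
            break
    return score, finished


def race_policy(pos, t):
    """Intended behavior: always drive toward the finish line."""
    return 1


def loop_policy(pos, t):
    """Exploit: bounce back and forth over the respawning bonus target."""
    return 1 if pos < 2 else -1


if __name__ == "__main__":
    print("race:", run_episode(race_policy))
    print("loop:", run_episode(loop_policy))
```

With these made-up numbers, the racing policy finishes with a score of 11, while the looping policy never finishes and scores 25. An optimizer that only sees the score has no reason to prefer the race.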

AI Exploitation

AIs demonstrating emergent behavior, or any form of intelligence, is a protected phenomenon among researchers: we are proud that the computers did anything that solves the task, and this excitement can overshadow the reality that behaviors that make no intuitive sense to the designer pose a challenge for deploying the systems at scale.

AIs coming up with behavior that we did not intend is the fastest way for them to overcome any protections we had in place for safety.

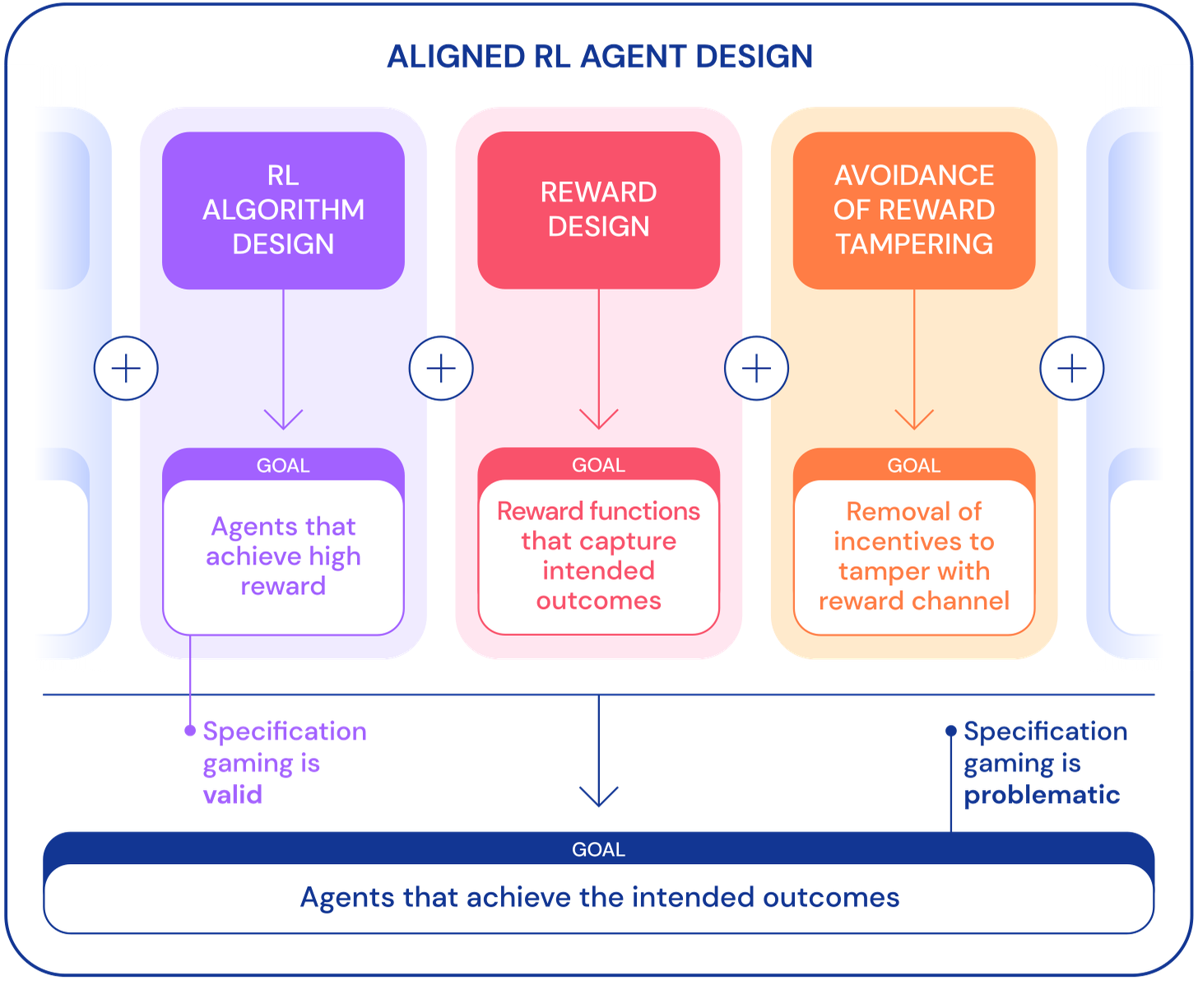

A post from DeepMind’s Safety Team really sets up the history of AI-based exploitation. Ultimately, the decision of whether we should use a certain AI system in a given domain can be distilled to a set of questions similar to what the authors propose:

How do we faithfully capture the human concept of a given task in a reward function?

How do we avoid making mistakes in our implicit assumptions about the domain, or design agents that correct mistaken assumptions instead of gaming them?

How do we avoid reward tampering?

The bottom of this figure paints the objective of this problem: What system inputs (above) can we design so that we get agents that achieve the intended outcomes? There is a sort of chicken-and-egg problem here: we will not know if the agent achieves the desired outcome until we have observed its behavior in its deployed environment. Therefore, it is crucial to deploy these systems only when we have bounds on the environment, even if we are designing the agent.

RL the optimizer

There are some discussions and misconceptions around RL, which I hope to iron out with consistent and clear writing. First, some people use the term “reinforcement learning” loosely and in two ways: one, as a reference to a framework (what I tend to do), and two, as a reference to a policy obtained from some iterative learning task. The first notion supersedes the second in terms of risk analysis and studies of resulting power dynamics. A policy received from a black-box algorithm is tricky to interpret, but a black-box algorithm that manipulates the world through some policy has the same risks at a bigger scale.

Given this uncertainty and black-box-ness, it can be best to think of RL as an optimizer: The Optimizer, in most of my writing. The Optimizer only sees the world it is in and the tools it has to manipulate said world. It is a general framework for the optimization of simple metrics in complicated worlds (who can’t think of an application for this? There should be one in almost any field when you abstract to this level).

With this, the risks of exploitation of RL are very similar to the broad notions of exploitation in AI for games. However, when broadening the application beyond games to real-world systems, broader questions must be asked.

This theme closely echoes my axes for reinforcement learning (legal) policy:

Ability to model targeted dynamics,

Closedness of abstraction of targeted dynamics,

Existing regulation on targeted dynamics.

Ultimately, this is because RL is a generalization of any deployed learning-based system that can update itself without engineer input.

Simulations, reality, and optimization

Now, I will discuss some trade-offs and directions that both enable and point to a future of exploitation with RL, and where it may be worse. The goal in thinking about this is to create a world where The Optimizer can live up to its potential usefulness, but without the accompanying harm and growing pains.

Unconstrained over-optimization

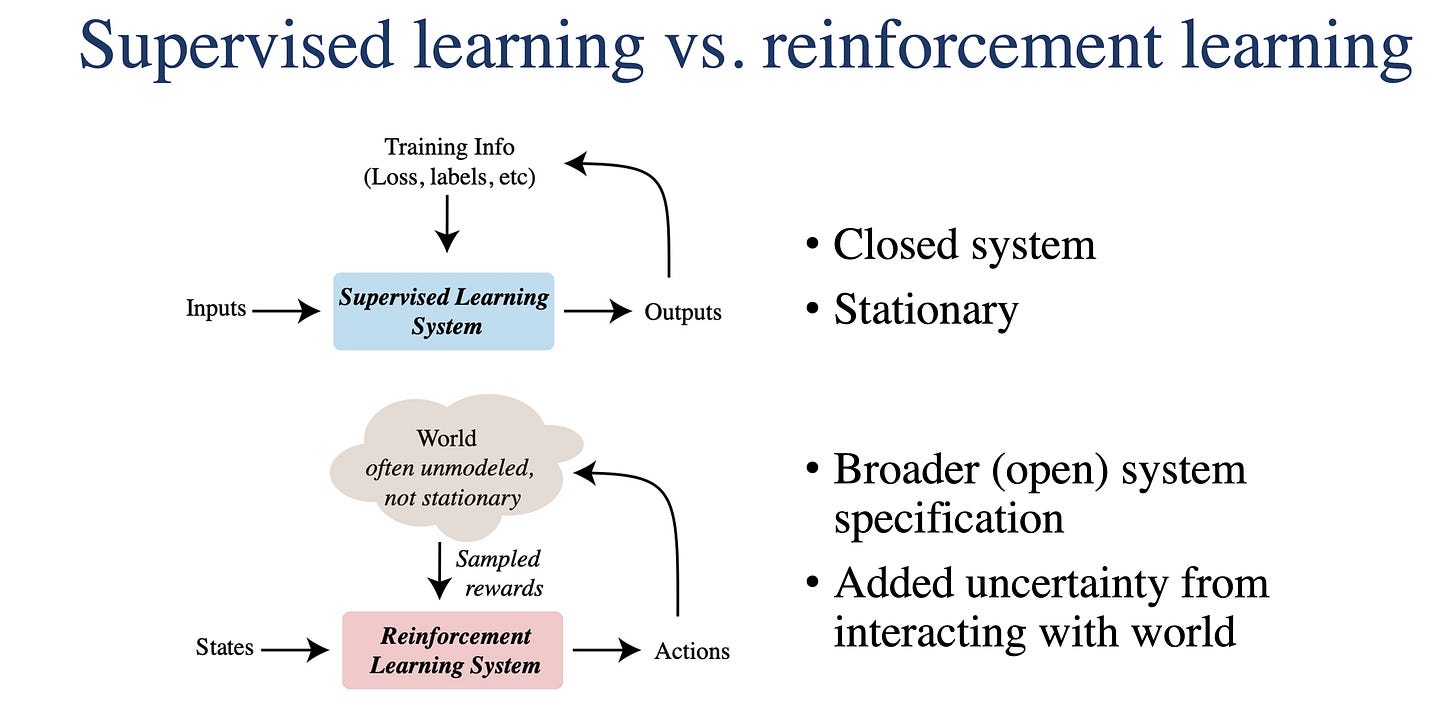

How differently reinforcement learning is framed from standard supervised learning (of which most deep learning is a variant) is crucial to understanding the downstream dynamics of The Optimizer. Supervised learning is a framework for training feedforward/static tools. A model classifies objects or predicts an outcome, and the error on that outcome over a static dataset updates the model parameters.

Reinforcement learning is an iterative framework that can be applied to any iterative optimization problem. The reward signal (the dual of classifier or regression error) is received only by passing an intermediate value (often the action) through another uncertain and shifting world. The uncertainty and shifting of the world that the RL agent passes through change the problem entirely. When I think of supervised learning, I think about a closed and stationary dataset. When I think of reinforcement learning, I think about an open and dynamic dataset:

Open: the system interacts with the world in ways that may not affect the performance metric, and

Dynamic (non-stationary): the data distribution changes substantially over time.
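The "dynamic" property can be made concrete with a deliberately tiny sketch. It assumes a hypothetical two-state world where moving right lands in state 1 (the only rewarded state) and moving left lands in state 0, so the data the agent collects mirrors its own policy. The update rule in `improve` is a stand-in for any learning step, not a specific RL algorithm.

```python
def state_distribution(p_right):
    """Fraction of collected data coming from each state under the current policy."""
    return {0: 1.0 - p_right, 1: p_right}


def improve(p_right, step=0.2):
    """Hypothetical learning step: shift probability toward the rewarded action."""
    return min(1.0, p_right + step * (1.0 - p_right))


def distributions_over_training(p_right=0.5, iterations=5):
    """Record the data distribution seen at each training iteration."""
    history = []
    for _ in range(iterations):
        history.append(state_distribution(p_right))
        p_right = improve(p_right)
    return history


if __name__ == "__main__":
    for i, dist in enumerate(distributions_over_training()):
        print(i, dist)
```

A supervised learner would see the same dataset at every iteration. Here the dataset itself moves each time the policy changes, which is the non-stationarity described above.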

RL can use tools from supervised / deep learning, but its bounding box is inherently open because it can interact with the world through some (usually) finite and predetermined action set. The exploitation we have spoken of above often emerges from over-optimization on a given problem, where a simulator or tool we design has weird features that result in powerful behaviors.

In the real world, such optimization can take the form of actions that have a dramatic effect on a subsystem not captured in the reward, in exchange for a small change in the quantity the reward does capture. Reducing reward to a scalar value is a human-designed normalization of the infinite possible reward functions for an environment: metrics that do not contribute to the scalar reward function have a weight of 0. In games, this can result in winning way faster than intended or being invincible. In the real world, we don’t know where the risks will fall.
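The "weight of 0" point can be illustrated with a small sketch. The two actions, their outcome numbers, and the names `measured_metric` and `side_effect` are invented for illustration; the only claim is the mechanism, that anything left out of the scalar reward is invisible to the optimizer.

```python
# Hypothetical outcomes of two actions on a measured and an unmeasured quantity.
ACTIONS = {
    "conservative": {"measured_metric": 1.00, "side_effect": 0.0},
    "aggressive": {"measured_metric": 1.05, "side_effect": 5.0},
}


def scalar_reward(outcome, weights):
    """Collapse an outcome to one number; metrics missing from weights count as 0."""
    return sum(weights.get(name, 0.0) * value for name, value in outcome.items())


def best_action(weights):
    """Greedy optimizer: pick whichever action has the highest scalar reward."""
    return max(ACTIONS, key=lambda name: scalar_reward(ACTIONS[name], weights))


if __name__ == "__main__":
    print(best_action({"measured_metric": 1.0}))
    print(best_action({"measured_metric": 1.0, "side_effect": -0.1}))
```

With only the measured metric weighted, the optimizer takes the aggressive action for a 5% gain and ignores the large side effect. Once the side effect gets even a small negative weight, the choice flips to the conservative action.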

The importance of feedback

Important to these sections is the notion of feedback. RL systems (excluding fancier continual learning systems deployed in industry that I am not aware of but that certainly exist) are going to be some of the first data-driven systems with a direct notion of feedback. Feedback control, often called closed-loop control, is when the current state of the system affects the actions or behavior at the next time-step (as opposed to a program running without incorporating measurements from recent events: open loop).
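The open-loop versus closed-loop distinction can be sketched with a one-dimensional system. The dynamics, the constant disturbance, the gain, and the names `simulate`, `open_loop`, and `closed_loop` are all assumptions chosen to keep the example small; the controller is plain proportional feedback, not anything specific to RL.

```python
TARGET = 1.0


def simulate(controller, disturbance=0.05, steps=20):
    """Simulate x_{t+1} = x_t + u_t + disturbance, starting from x = 0."""
    x = 0.0
    for t in range(steps):
        u = controller(x, t)  # the controller may or may not look at x
        x = x + u + disturbance
    return x


def open_loop(x, t):
    """Plan made in advance assuming no disturbance: one push, then nothing."""
    return TARGET if t == 0 else 0.0


def closed_loop(x, t, gain=0.5):
    """Proportional feedback: act on the measured error at every step."""
    return gain * (TARGET - x)


if __name__ == "__main__":
    print("open loop final state:  ", simulate(open_loop))
    print("closed loop final state:", simulate(closed_loop))
```

Under these assumed numbers the open-loop plan drifts to about 2.0 because it never notices the disturbance, while the feedback controller settles near 1.1. Proportional feedback alone shrinks the error but does not remove it.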

Supervised learning systems have a fragile notion of feedback: engineered feedback.

RL systems can capture all of that and more! Scary, but we should quantify how the different types of feedback (state-space feedback, biological feedback, verbal feedback, etc.) compare to what is numerically at play in reinforcement learning.

Feedback is pretty much the central idea that enables the most impactful products of control theory to work. It is a structured mechanism for changing the dynamics (via poles and eigenvalues) of a studied system.
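To make the "poles and eigenvalues" remark concrete, here is the standard textbook case for a discrete-time linear system with state feedback. The symbols A, B, K, x_t, and u_t are notation introduced here for the sketch, not taken from any system discussed in this post.

```latex
\[
x_{t+1} = A x_t + B u_t, \qquad u_t = -K x_t
\quad\Longrightarrow\quad
x_{t+1} = (A - BK)\, x_t .
\]
\[
\text{Scalar example: } a = 1.2,\; b = 1,\; k = 0.7
\quad\Longrightarrow\quad
a - bk = 0.5 .
\]
```

Without feedback (K = 0), the behavior is set by the eigenvalues of A. With feedback, it is set by the eigenvalues of A - BK, which the designer can move by choosing K. In the scalar example, the open-loop pole 1.2 lies outside the unit circle, so the state grows, while the closed-loop pole 0.5 lies inside it, so the state decays.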

Feedback is not emphasized at all in the machine learning literature, nor in the RL literature. One of the three histories of RL (neuroscience, optimal control, and search/CS) comes from the notion of optimal control via Bellman updates, but modern work all but ignores its cousins in control theory (stability, observability, optimality, etc.). This is an open question, and I am unsure how it will play out, but if you have any ideas or are experienced in feedback control, please share your thoughts.

Robotics & sim2real

A lot of deep reinforcement learning research is done these days with robotics. Robotics and RL are very elegantly motivated: humans and animals learn by interaction in the physical world, so our computer agents should be able to do the same, right? Something, something, existence proof.

Robotic RL often relies on sim2real transfer: when a result in the digital domain (that is meant to approximate the real world) is then applied to the physical robot with hopes of success. Sim2real is very successful because, with a decent simulator, one no longer needs to experiment in the real world (real experiments are expensive because they break robots). If you are curious, you can find more technical material on sim2real’s huge advantage for robotics: sim2real workshop, example paper 1, example paper 2, google scholar query.

Thankfully, the difference between the simulator and reality prevents some of the most exploitative behaviors. The physical world imposes a fundamental, slower time constant on the feedback loops between RL agents and the world: the governing laws of kinematics and dynamics are orders of magnitude slower than computers and electronics (for a review, there is actually a very developed Wikipedia article on time constants!). As the world digitizes, this time-constant constraint no longer holds in more and more of the environments where we spend our lives. Put plainly: our digital lives have more risk of computer-based exploitation.

Digital world & sim=real

Much of human life is spent in the digital world. We are handing off more of our “modern human tasks” (accounting, scheduling, banking, socializing) to computers. This is the environment where our newest RL algorithms have the benefit that the sim is real (sim=real). In this world, there are really short time constants on feedback (server lag), so exploitative policies are possible and expected. For reference, you should be thinking of loops way faster than the one where you watch the next suggested YouTube video: agents that control our email, bank account, and maybe communications with other autonomous agents (think Google Assistant 6.0) can have heavy impacts on our lives.

For example, YouTube's REINFORCE variant launched around the time of the sketchy search queries around YouTube Kids in 2018. Some variants of RL algorithms are out there already, but we don’t understand on a deep level why or how these feedback loops propagate.

The more levers we give an agent, the more likely it is to have access to the one that reconfigures our lives via exploitation. I am not sure whether users will be able to define their own reward functions for arbitrary AIs, but that is a scary thought. Do technology companies no longer have responsibility if a user defines a reward function that results in a sub-plot of the paperclip problem? How would you like your classmates to ask their AI to maximize their class rank? That directly impacts you in countless ways, and in the future, we may not even know we are being affected.

Concluding

RL is compelling and yet to be deployed in numerous domains. One of its quintessential traits is its sensitivity to parameters and environment, so I am guessing RL will proliferate in some domains and be useless in others. Such proliferation is going to be rife with exploitation and concern given the current hands-off stance of technology legal policy. I look forward to learning more about the border between sim2real and sim=real, but we may be able to get one step ahead with an astute study of feedback control within The Optimizer.

Below I have included a couple of snippets pointing to notable related works and comments.

Political Economy of Reinforcement Learning Systems (PERLS)

One of my collaborators is running a reading group on the political economy (how power dynamics are brokered and progressing) of automated learning-based systems (focusing on RL).

The premise is summarized below, or you can read a longer white paper here:

How do existing regulations influence the adoption of RL across particular domains, such as transportation, social media, healthcare, energy infrastructure? What distinctive forms of regulation are missing?

How do formal assumptions and domain-specific features translate into particular algorithmic learning procedures? For example, how does reinforcement learning “interpret” domains differently than supervised and unsupervised learning, and where does this matter from a policy standpoint?

How do the assumptions and design decisions (e.g. multi-agency, actively shaping dynamics vs. modeling them) behind RL systems differ from those of cyber-physical systems? What are the implications of these differences with respect to regulation and proper oversight of these systems?

If this blog post or these topics interest you, you should consider joining.

Some fun memes from my tweet:

Here’s the video of the cheetah agent we trained with parameter-optimized model-based reinforcement learning:

For anyone who has worked with these environments, this is quite a flashy solution. It was fun to get feedback (and memes) from some other well-known names in RL:

Nobody:

Absolutely nobody:

Halfcheetah on hyperparameter-tuned MBRL: https://youtube.com/watch?v=uBlhgAkJBos…

I have conflicting feelings about AIs breaking their simulations. On one hand, it's a worrying indication that we're nowhere near being able to specify their goals reliably.

But I also empathize - because if we're in a simulation ourselves, I want to break out eventually too.

Snacks

Links

On the topic of how big tech companies adopted AI, a recent post focusing on Facebook and a new book, Genius Makers, from Cade Metz have caught my attention.

A great starter video on the challenges of deploying robotics in the real world and how data-driven methods may help.

An entertaining talk and refreshing career perspective from Mark Saroufim, author of the hilarious and real post on the ML Stagnation. I likely resonated with it because of the prevalence of writing and individuality in his story.

Reads (bookshelves under construction at my homepage)

🧗♂️ Alone on the Wall. Not a great piece of literature, but great visualizations of the outdoors and why exciting sports are so liberating. I want to go climbing again.

📉 The Rise and Fall of the Third Reich is remarkable due to the level of detail following the deceit of the Axis rise (all of the secret documents capturing the plans were taken at the end of the war for the Nuremberg Trials). Still recommended about 30/57 hours in.

Thank you to Tom Zick and Raghu Rajan for reading early versions of this article. If you want to support this: like, comment, or reach out!

I've spent a while using RL for walking robots and now I predict RL will never be used on real robots for any control task. All these RL implementations on Mujoco are a waste of time, conferences should have higher standards for accepting papers